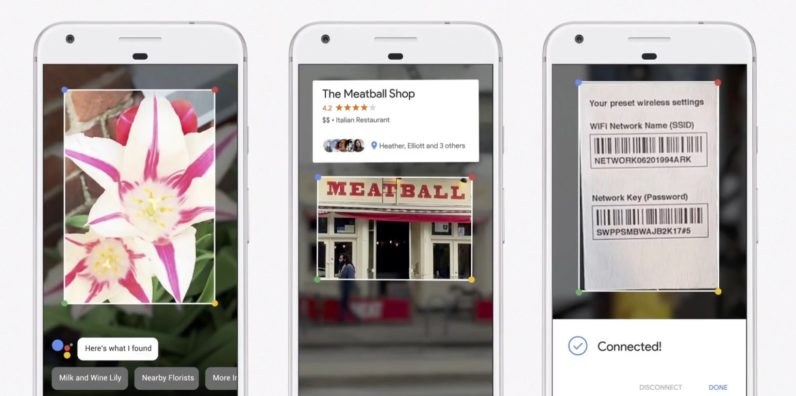

Google introduces Lens, an AI in your camera that can recognize objects

Google announced at its I/O conference today that it’s working on a new form of AI called Google Lens, which understands what you’re looking at and can help you by providing relevant responses.

Google (@Google) tweeted on May 17, 2017: “With Google Lens, your smartphone camera won’t just see what you see, but will also understand what you see to help you take action. #io17 pic.twitter.com/viOmWFjqk1”

With Lens, Google’s Assistant will be able to identify objects in the world around you and act on them through Google’s various apps. For example, Lens can help you identify a flower you’re looking at…

This story continues at The Next Web
